Building a simple backup solution for Hyper-V-based virtual machines using OneDrive for Business and PowerShell

At home, I run several PCs – a few laptops, and one workstation which is a bit more performant, so that I can run Hyper-V and virtual machines on it. It’s also my server for Plex, the amazing multimedia system that effectively allows me to have my own private Netflix wherever I go.

At the moment, my server runs on Windows 10 Pro for Workstations, which is the special edition for high-performance PCs. Among other things, it provides a new power plan named Ultimate Performance, for a little bit of added push. It also supports Hyper-V, so I can comfortably run virtual machines locally instead of relying on Azure for everything.

I run my VMs from two disks – one is a single 1 TB SSD, and the other is a mirrored (RAID-1, for you old-schoolers) 512 GB M.2 SSD. They are both more than fast enough for me, so I run the more important VMs from the mirrored drive, and test VMs from the single drive.

Business problem

The real problem I’ve had is backups. I usually back up everything three times:

- to Azure Storage

- to external USB HDD

- to my Synology NAS (read here how I built the Synology –> Azure connection)

This works great! I get daily notifications when backups are completed, and I regularly test restoring content from all three media to ensure I’m good.

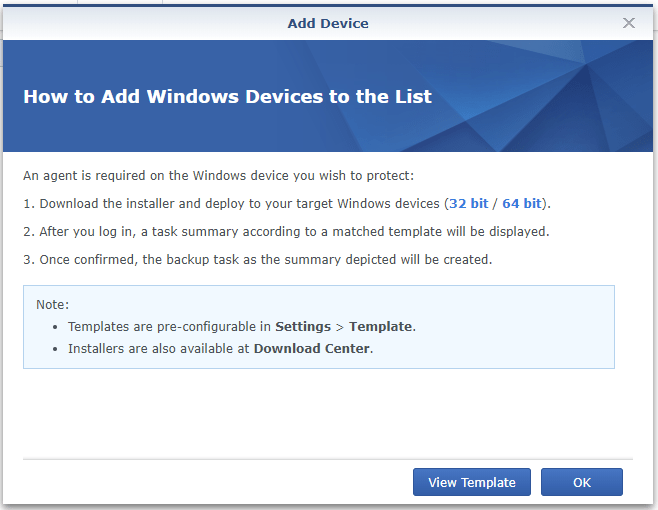

Synology also supports backing up VMs, via an app called Active Backup for Business.

It supports backing up VMs directly via an agent, which I haven’t bothered installing (yet). I’ve been relying on a lightweight backup approach through file shares – Synology reaches out to my D$ and E$ admin shares and picks up what it can.

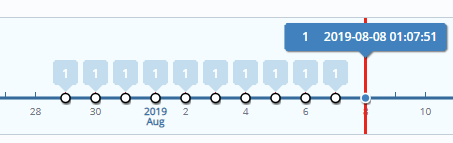

I realize this isn’t optimal. It also might not ensure data consistency, as some of my VMs might be happily performing tasks while they’re being backed up without an agent. Synology also provides a fancy restore portal to manage backups and restore operations.

Unfortunately, as I’m running a workstation-variant of Windows 10, I’m unable to employ Volume Shadow Copy for snapshot-based backups.

So, I decided that while I’m finding the time to properly test Synology’s own backup agent, perhaps it’s time to fix my VM backups some other way.

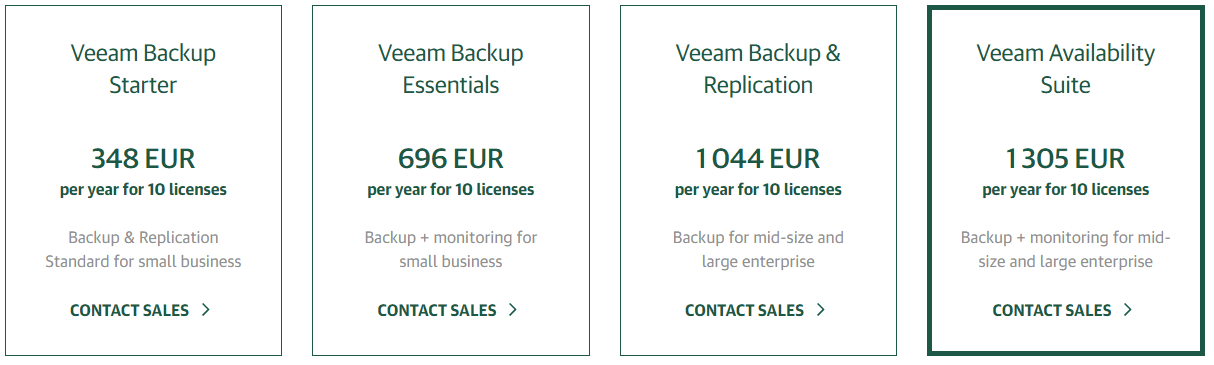

Veeam provides a solid backup solution for Hyper-V, but looking at pricing I knew I didn’t have a need for such an enterprise solution right now.

One could argue that the cheapest option (348 € per year) is still very affordable, which it is. But perhaps I could build something quickly on my own, and then later figure out a proper backup method with Synology, Veeam or some other vendor?

Building the solution

Building out the solution proved to be quite easy. I have several options to choose from for storage:

- Synology NAS, meaning local storage quickly accessible and quite affordable

- Azure Storage, very affordable but requires a bit of tinkering for direct writes

- OneDrive for Business, with 5 TB of paid-for storage through the paid subscription plan

I chose to try out OneDrive for Business, as I’m using it a bit to share certain content externally. I also knew that manipulating Hyper-V is easiest with PowerShell.

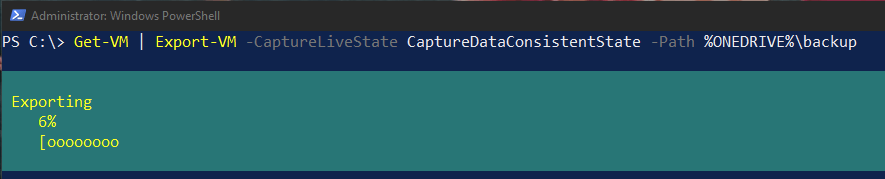

I started with one simple command to test my hypothesis of easy and affordable backups for Hyper-V:

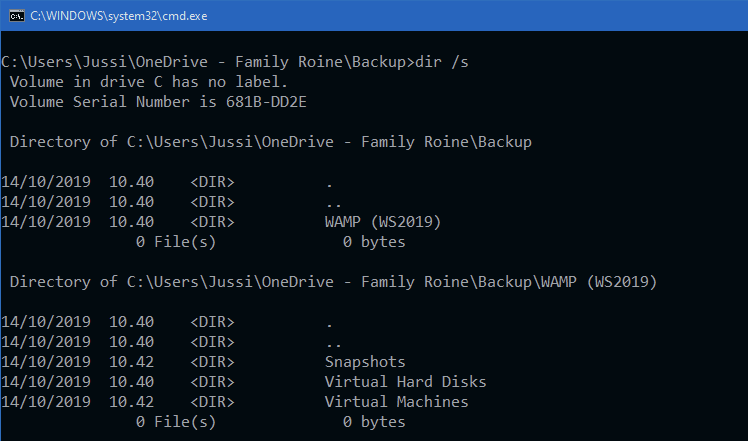

Get-VM | Export-VM -CaptureLiveState CaptureDataConsistentState -Path "$env:OneDrive\backup"

And it just works!

Obviously, this simple one-liner is missing a lot of things. What if the VM is running? The Export-VM cmdlet supports different capture modes for the live state through its -CaptureLiveState parameter. I chose the CaptureDataConsistentState value, which uses Production Checkpoint technology. This, in turn, uses Volume Shadow Copy but leaves out the memory state of the VM. It’s also the default checkpoint type for Hyper-V.
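To make the capture mode a bit more concrete, here is a minimal sketch of the same export written out as a small script. The dated subfolder and the variable names are my own assumptions for illustration, not something Hyper-V requires. Besides CaptureDataConsistentState, the -CaptureLiveState parameter also accepts CaptureSavedState and CaptureCrashConsistentState.

```powershell
# Sketch only: requires the Hyper-V PowerShell module and an elevated session.
# The 'backup' folder matches the one-liner; the dated subfolder is an assumption.
$backupRoot = Join-Path $env:OneDrive 'backup'
$target     = Join-Path $backupRoot (Get-Date -Format 'yyyy-MM-dd')

# Create the target folder if it does not exist yet.
New-Item -ItemType Directory -Path $target -Force | Out-Null

foreach ($vm in Get-VM) {
    # CaptureDataConsistentState exports via a production checkpoint,
    # so a running VM is captured without its memory state.
    Export-VM -VM $vm -CaptureLiveState CaptureDataConsistentState -Path $target
}
```

As far as I can tell, Export-VM refuses to write into a folder that already holds an export of the same VM, which is why this sketch uses one folder per day. Running it twice on the same day would need a different folder name or a cleanup step first.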

To further ensure I’m capturing all relevant data with my script, I could turn off all VMs first by adding the following command as the first line in my script:

Get-VM | Stop-VM

This gracefully stops (shuts down) all VMs, and the ones that are stopped will remain stopped. After the backup is complete, they don’t restart automatically, however. I’m fine with this, as I want to keep the solution super simple, and all of my VMs are for testing, hacking around and just for myself – so I can easily spin them up at a moment’s notice when I need to. There are a number of scripts available for this, such as this, this, and this.
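If I ever wanted the VMs to come back up after the backup, a rough sketch could look like the one below. It is not what I run today, and the variable name is just for illustration. The idea is to remember which VMs were running, shut them down, export, and then start only those again.

```powershell
# Sketch only: requires the Hyper-V PowerShell module and an elevated session.
# Remember which VMs are running right now; @() keeps this an array even when empty.
$running = @(Get-VM | Where-Object { $_.State -eq 'Running' })

try {
    # Stop-VM asks the guest operating system to shut down cleanly by default.
    $running | Stop-VM

    # All VMs are off at this point, so a plain export is enough.
    Get-VM | Export-VM -Path "$env:OneDrive\backup"
}
finally {
    # Start only the VMs that were running before, even if the export threw an error.
    $running | Start-VM
}
```

VMs that were already off stay off, which matches how the simple version behaves.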

How about OneDrive for Business then? I’m referencing the path through a system environment variable named %ONEDRIVE%, which PowerShell exposes as $env:OneDrive. There’s another called %ONEDRIVECOMMERCIAL%, which points to OneDrive for Business. Both point to the same directory on my machine, as I’m not using any other OneDrive services.
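Since the %NAME% syntax belongs to the classic command prompt and PowerShell reads environment variables through $env:NAME, a small helper can pick the right folder. This is a sketch under my own assumptions: the function name Resolve-OneDrivePath is made up, and it simply prefers the OneDrive for Business variable when it is set.

```powershell
function Resolve-OneDrivePath {
    # Prefer the OneDrive for Business variable, then fall back to the generic one.
    $candidates = @($env:OneDriveCommercial, $env:OneDrive)

    foreach ($candidate in $candidates) {
        # Skip variables that are not set or that point to a missing folder.
        if ($candidate -and (Test-Path -LiteralPath $candidate)) {
            return $candidate
        }
    }

    throw 'No OneDrive folder found. Is the sync client installed and signed in?'
}
```

The backup folder could then be built with `Join-Path (Resolve-OneDrivePath) 'backup'`.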

A limitation from OneDrive’s side is the maximum file size. According to the latest official guidance, individual files cannot exceed 15 GB in size. This will turn out to be a problem, as some of my Hyper-V files are up to 19 GB in size. I’m not sure what will happen then – perhaps OneDrive will simply not synchronize them.
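To see up front which exported files would hit that limit, something like the following sketch could be run against the backup folder. The function name Get-OversizedFile and its parameters are made up for illustration, and the 15 GB default simply mirrors the limit mentioned above.

```powershell
function Get-OversizedFile {
    param(
        [Parameter(Mandatory)] [string] $Path,   # folder to scan
        [long] $LimitBytes = 15GB                # assumed limit, taken from the text above
    )

    # List every file below $Path that is larger than the limit, with its size in GB.
    Get-ChildItem -LiteralPath $Path -Recurse -File |
        Where-Object { $_.Length -gt $LimitBytes } |
        Select-Object FullName, @{ Name = 'SizeGB'; Expression = { [math]::Round($_.Length / 1GB, 1) } }
}
```

Calling `Get-OversizedFile -Path "$env:OneDrive\backup"` would list the virtual disks that are too large, so at least I would know which VMs are not really backed up.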

In summary

I’ve used this solution for about a week now, and it’s simple and it works. It’s far from robust, though – there are a number of components that, should they fail, would keep my backups from completing. The obvious challenge is that certain VMs are too big for OneDrive for Business to support. For this, I’m thinking of replacing my backup destination with either Synology or Azure Storage directly.

This was fairly easy to build, and I enjoyed building something so quickly while still achieving something very useful!