Building a double-blind email distribution list using Azure Logic Apps and Exchange Online

I’ve recently worked with Azure Logic Apps a fair bit. Last night, I wanted to stretch my understanding of the Logic Apps service a bit further and started thinking about what would be a fun solution to build. I came up with an idea of a double-blind email distribution list.

What is a double-blind email distribution list?

Usually, with double-blind, the intention is to provide anonymization: participants do not see each other’s email addresses, yet they can still communicate.

For example, if I want to email [email protected], using my email of [email protected], I want to keep my personal email address hidden. Thus, I need some sort of a proxy approach that acts as a remailer between the participants.

The solution

I spent about 45 minutes building the first draft of the service. I then tweaked it further and spent an additional hour testing and troubleshooting the solution. In the end, I was able to reduce it to a very simple approach, although I initially thought it would be far too complex a solution.

How it works is simple:

- A user emails a given email address, such as [email protected]

- The subject of the first email tells the remailer who needs to be part of the distribution list

- The remailer hides the sender and recipient email addresses and provides a unique identifier for them to get connected

- Whenever someone emails [email protected] with the unique identifier, the email gets distributed to all participants – without them seeing each other’s email addresses

For the solution, I need only the following:

- An Exchange Online email account – such as [email protected]

- A Logic App

- A Storage Account for storing the messages and remailer information

How it works

I want to set up a double-blind email distribution list, so I email [email protected] with the following subject format:

[DB] [email protected]

The prefix [DB] is a simple way to trigger my Logic App, and it can then pick up the recipient.

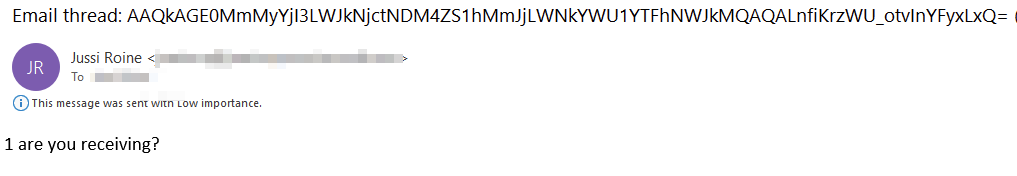

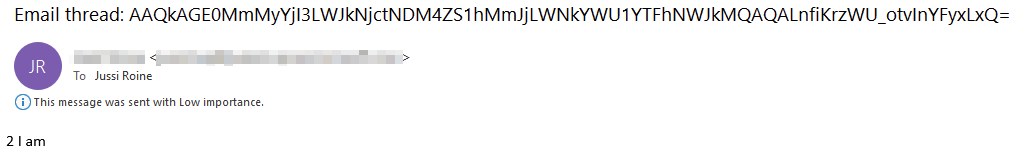

The recipient will receive an email that looks like this:

And should the recipient now want to reply through the double-blind service, they’ll only need to use the following format in the subject field:

[DB] REPLY <thread-id>

The thread id is included in the initial double-blinded email (AAQkA.. and so forth). All participants of the email thread will now receive these emails:

In essence, the thread id connects the participants, and a single Exchange Online email account connects them anonymously.

The Logic App

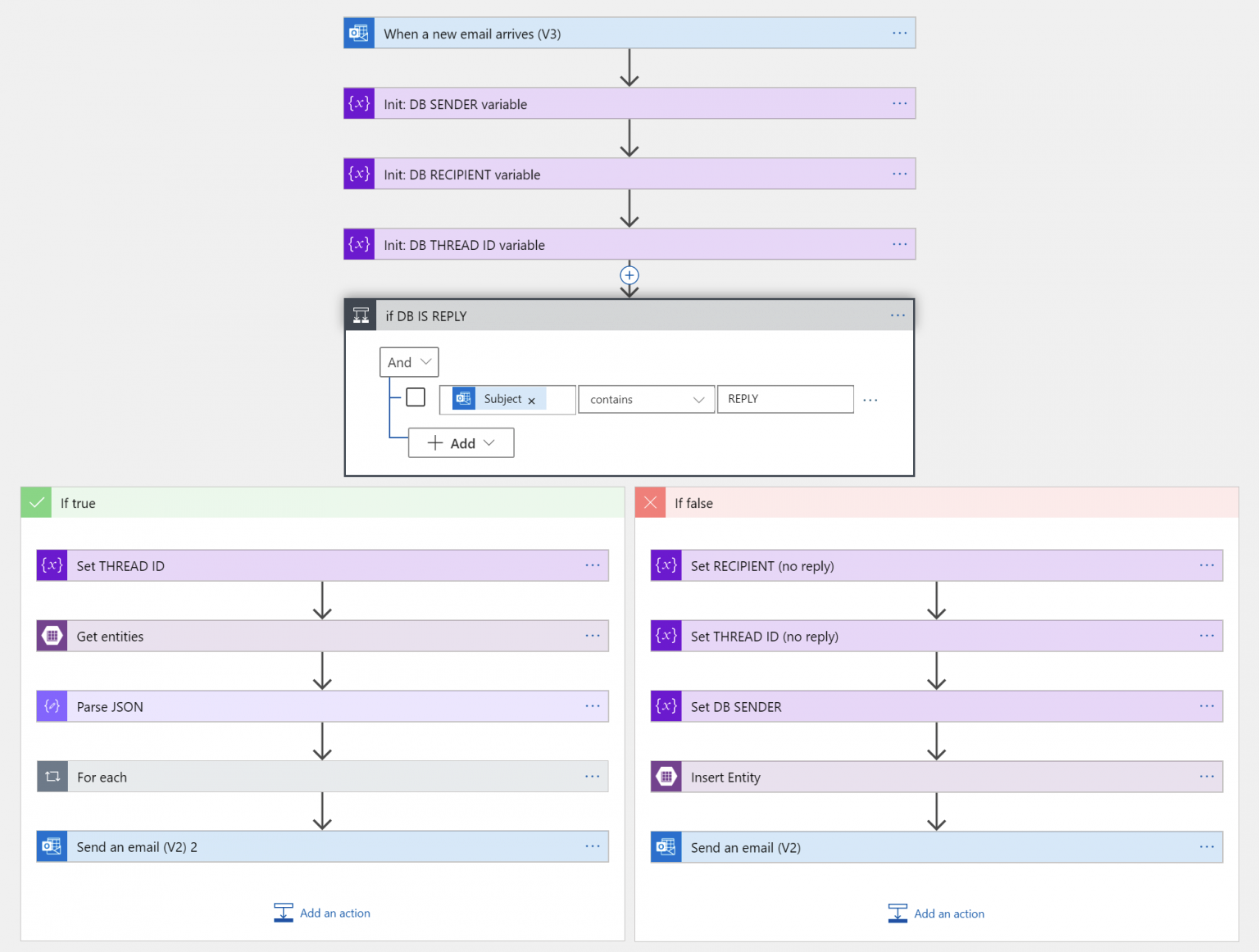

First, let’s take a look at the logic from a high level:

The Logic App gets triggered when a new email arrives:

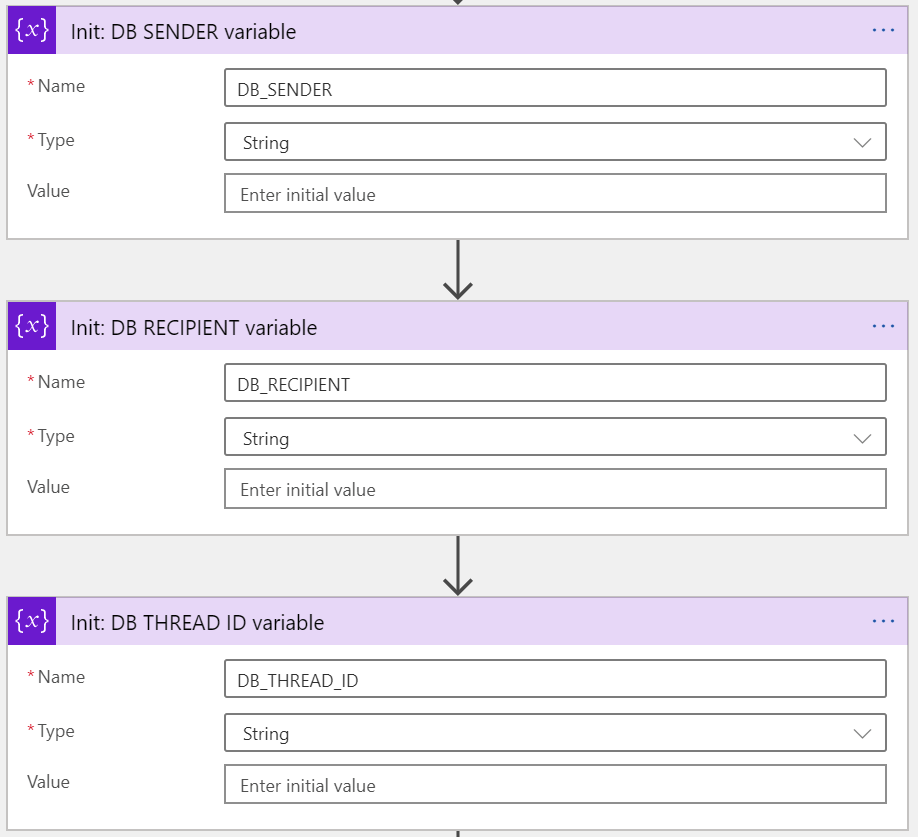

I’m filtering for all emails with [DB] to make it simpler to focus on the relevant messages. Next, I’ll initialize three variables:

- DB_SENDER: The original sender of the first email

- DB_RECIPIENT: The intended recipient(s)

- DB_THREAD_ID: The unique identifier to connect them all

They are all set to null, as we don’t have all the data yet.

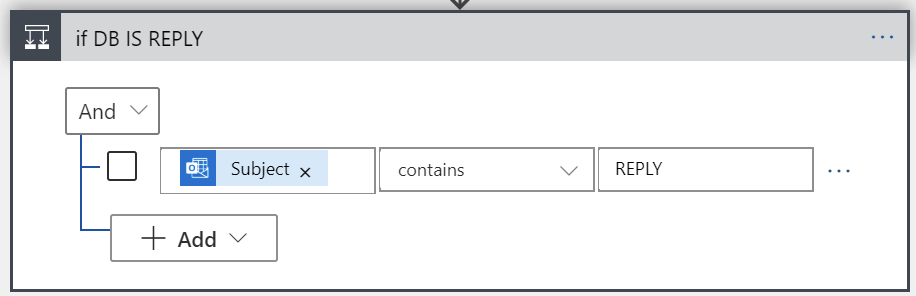

Next, I’ll check if this is a reply to a previous thread, or a new thread:

Thus, if the subject field conforms to the syntax of [DB] REPLY, we’ll treat this as a reply. Otherwise we can safely assume it’s the initial email, with the syntax of [DB] email-address. Let’s take a look at the latter first, so when someone initiates a new thread:
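The branching described above can be sketched outside Logic Apps as a small Python function. This is only an illustration of the subject convention, not the Logic App itself: the names `parse_subject` and `ParsedSubject` are hypothetical, and trimming whitespace is an assumption that the original expressions do not make.

```python
from typing import NamedTuple, Optional

PREFIX = "[DB] "              # 5 characters
REPLY_PREFIX = "[DB] REPLY "  # 11 characters


class ParsedSubject(NamedTuple):
    is_reply: bool
    payload: str  # thread id for replies, recipient address for new threads


def parse_subject(subject: str) -> Optional[ParsedSubject]:
    """Classify a subject line; return None when it lacks the [DB] prefix."""
    # Check the longer reply prefix first, since it also starts with "[DB] ".
    if subject.startswith(REPLY_PREFIX):
        return ParsedSubject(True, subject[len(REPLY_PREFIX):].strip())
    if subject.startswith(PREFIX):
        return ParsedSubject(False, subject[len(PREFIX):].strip())
    return None
```

The order of the checks matters: testing for the plain prefix first would classify every reply as a new thread.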

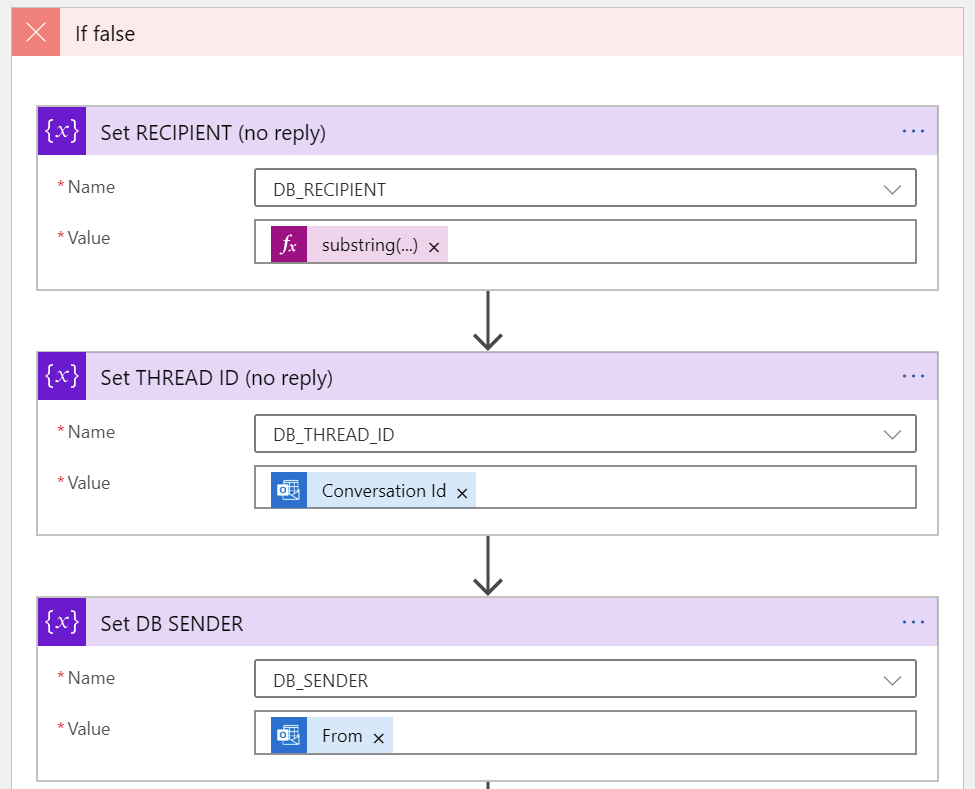

First, we’ll populate the three variables (DB_SENDER, DB_RECIPIENT and DB_THREAD_ID):

DB_RECIPIENT must be picked from the subject field with the Substring() function:

substring(triggerBody()?['subject'], 5)

DB_THREAD_ID is interesting, as it needs to be unique. I’m using the Conversation Id field from Exchange Online for this. I couldn’t find a reference confirming that it is guaranteed to be unique, but it is unique enough for this purpose.

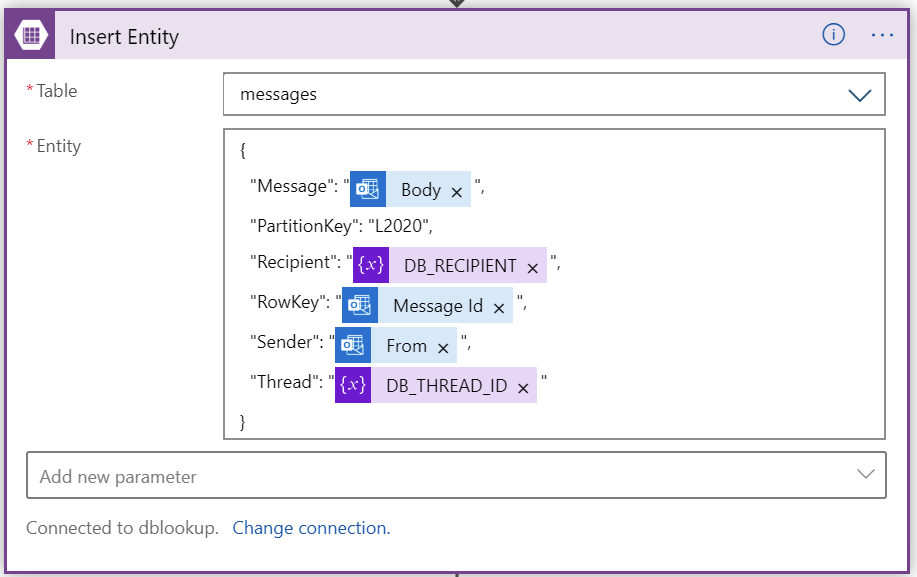

I’ll then store all of this in a database. I chose to use Azure Storage again, as it exposes the fantastic table-based structure. It allows for very ad-hoc testing, also. To insert a new entity in my table, I’m using the built-in action in Logic Apps:

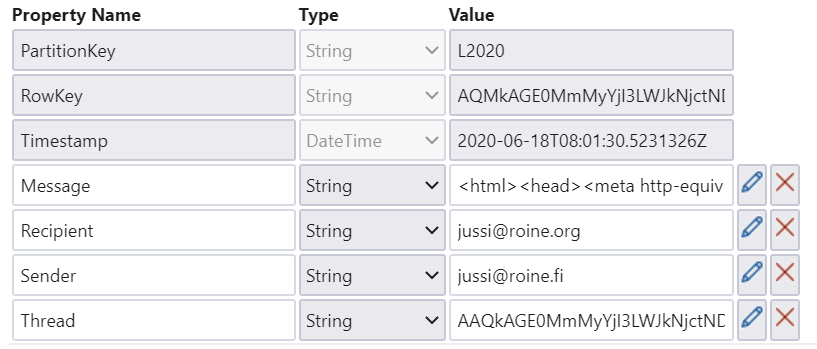

An initial email sent from [email protected] to [email protected] would look like this in the table storage:

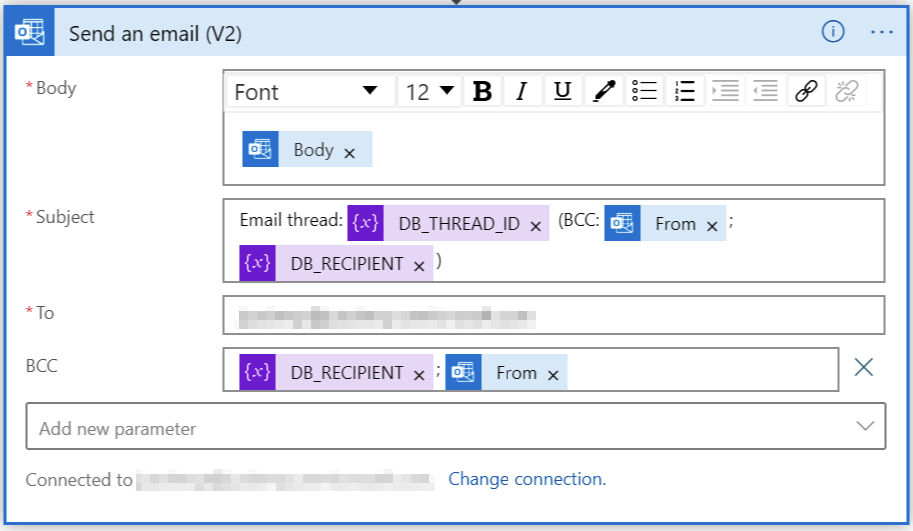

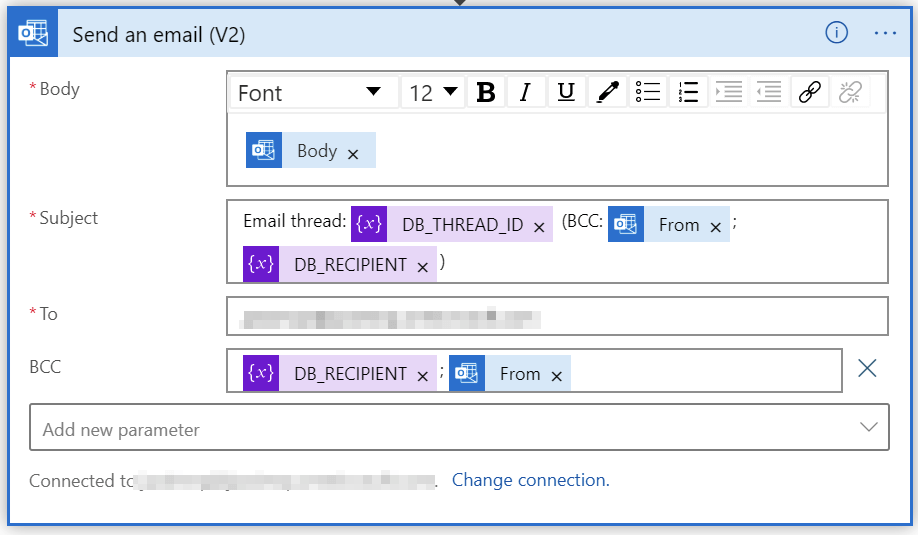
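As a rough illustration of what such a table entity might contain, here is a minimal Python sketch. The property names simply reuse the three variable names from above; the fixed `PartitionKey` value and the random `RowKey` are assumptions made for this example, not the schema actually used in the Logic App.

```python
import uuid


def build_thread_entity(sender: str, recipient: str, thread_id: str) -> dict:
    """Return a dictionary shaped like a table entity for a new thread.

    Azure Table Storage requires PartitionKey and RowKey on every entity.
    The values chosen here are hypothetical: a fixed partition and a random
    row key, which sidesteps characters that are not allowed in key fields.
    """
    return {
        "PartitionKey": "doubleblind",  # assumed partition name
        "RowKey": uuid.uuid4().hex,     # assumed: random unique row key
        "DB_SENDER": sender,
        "DB_RECIPIENT": recipient,
        "DB_THREAD_ID": thread_id,
    }
```

Keeping the thread id as an ordinary property, rather than as a key, means it can be queried without worrying about the key character restrictions.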

And all we need to do now is to send out the email to all participants of the thread:

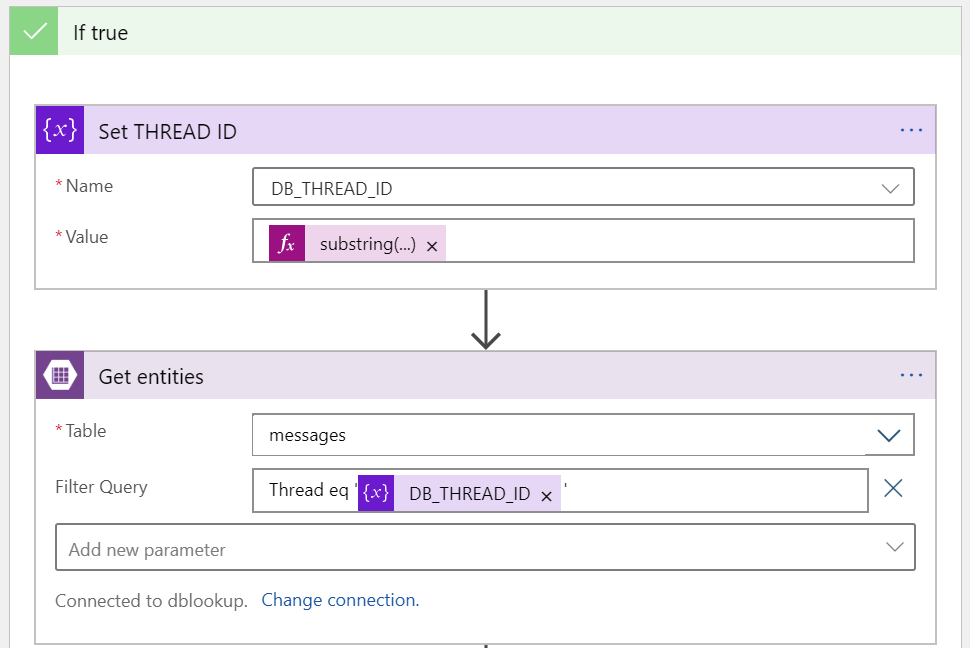

Next, let’s focus on the true path of the logic: what happens when we receive a reply to an earlier thread.

First, we’ll populate the thread id for DB_THREAD_ID. This is fetched from the email subject, again with the Substring function:

substring(triggerBody()?['subject'], 11)

Then, we’ll look up who the intended recipient is from the table storage. For this, we get all rows with the same thread id. The result is a JSON payload that needs to be parsed before its fields can be used. For this I’m using the Parse JSON action in Logic Apps.
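Because the thread id comes straight from an email subject, it is worth treating it as untrusted input when building the table query. A minimal Python sketch of an OData filter string follows; the function name `thread_filter` and the column name `DB_THREAD_ID` are assumptions for illustration. Single quotes inside an OData string literal are escaped by doubling them.

```python
def thread_filter(thread_id: str, column: str = "DB_THREAD_ID") -> str:
    """Build an OData filter that matches rows for one thread id."""
    # Double any single quotes so the value cannot break out of the literal.
    escaped = thread_id.replace("'", "''")
    return f"{column} eq '{escaped}'"
```

For example, `thread_filter("AAQkA")` produces `DB_THREAD_ID eq 'AAQkA'`.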

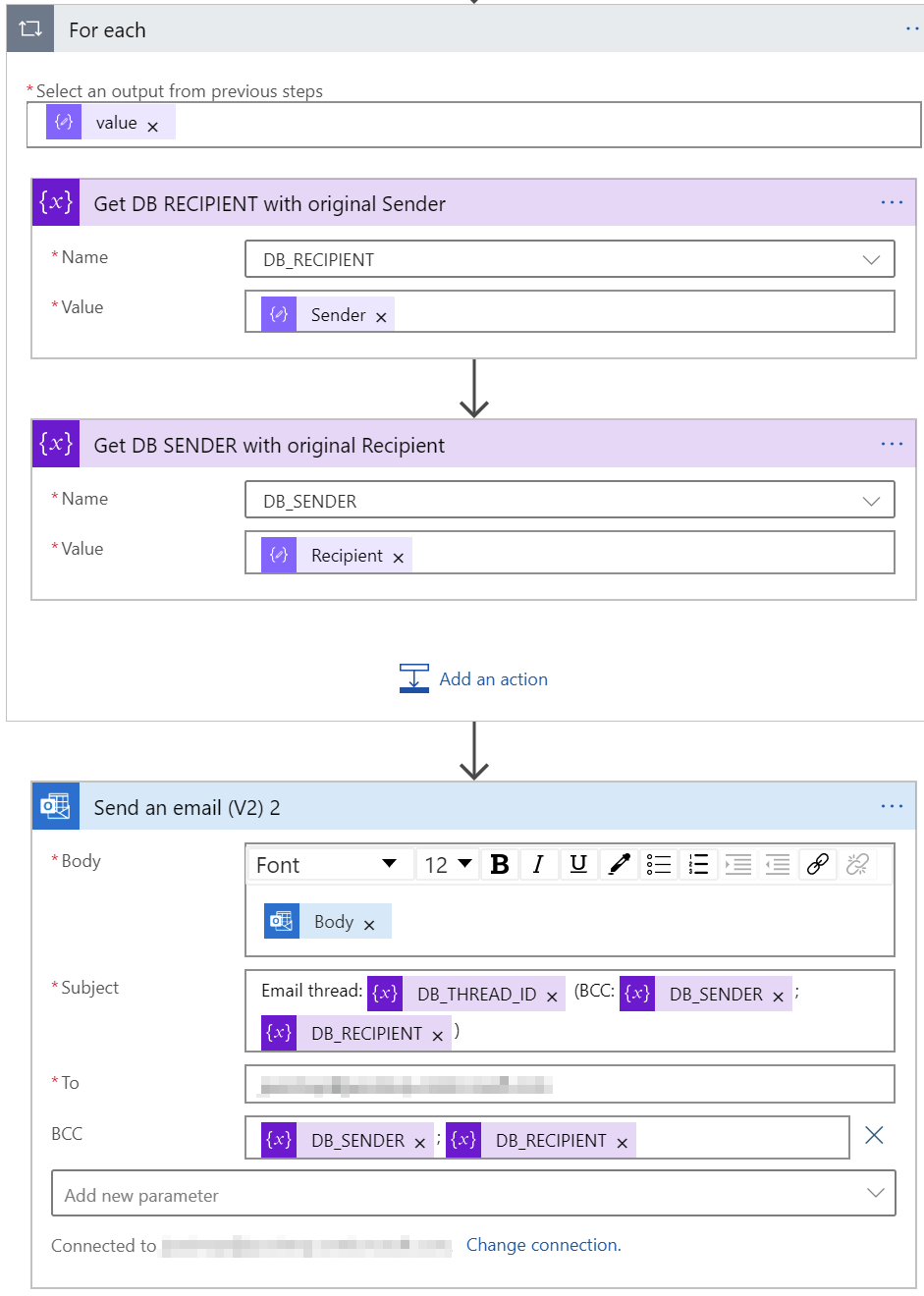

Finally, we loop through the entries and send the email out:

It gets confusing here, as we have to reverse the recipient and sender (as this is a response to an earlier email). Initially, I built the logic within the table storage, but figured it adds unnecessary complexity that can be avoided with this simple trick.
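One way to read the sender and recipient swap is "send to everyone on the thread except the person who is replying". The Python sketch below illustrates that reading only; the function name `reply_recipients`, the row keys `DB_SENDER` and `DB_RECIPIENT`, and the case-insensitive address comparison are all assumptions rather than details taken from the Logic App.

```python
def reply_recipients(rows: list, replier: str) -> list:
    """Collect every address on the thread except the replier's own."""
    replier_key = replier.strip().lower()
    seen = set()
    recipients = []
    for row in rows:
        # Each row holds one sender/recipient pair from the original email.
        for address in (row["DB_SENDER"], row["DB_RECIPIENT"]):
            key = address.strip().lower()
            if key != replier_key and key not in seen:
                seen.add(key)
                recipients.append(address)
    return recipients
```

With a single row from alice@example.com to bob@example.com, a reply from Bob goes to Alice, and a reply from Alice goes to Bob, without storing a second, reversed row.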

For all emails I’m using the Bcc field to hide the recipients. For quick troubleshooting, I’ve added the details in the subject.

Additional insights

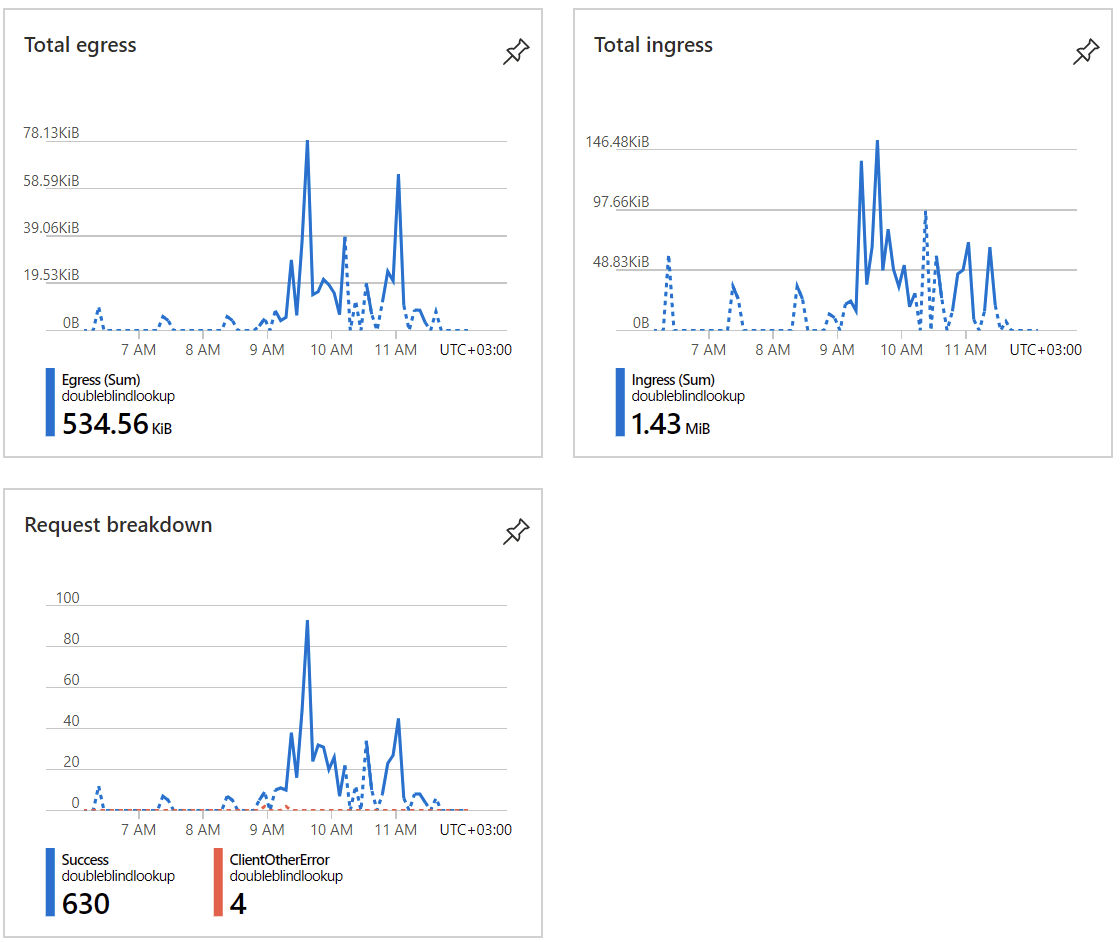

Executing the Logic App takes about a second each time; it’s blazingly fast. The storage account is also mostly idling:

Is this secure, though?

No, it’s not. All senders and recipients are stored in plain text within the table. Even if the storage account is encrypted at rest, anyone with access to the storage account could easily extract all the email addresses. To address that, I would use Azure Key Vault to hold an encryption key or a secret, and then store only encrypted values in the table. Plain hashes would not be enough on their own, as the remailer must be able to recover the addresses in order to send mail.

The other omission is that anyone who obtains a thread id can hijack the thread and simply reply to all participants. For this, it would make sense to add a simple sanity check: the sender must already be a participant of the thread id they are replying to.
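That sanity check could look roughly like the following Python sketch. It is not part of the Logic App as built; `is_thread_participant` and the row keys `DB_SENDER` and `DB_RECIPIENT` are hypothetical names, and the comparison assumes addresses should match case-insensitively.

```python
def is_thread_participant(rows: list, sender: str) -> bool:
    """Return True when the sender already appears on the stored thread."""
    sender_key = sender.strip().lower()
    for row in rows:
        participants = (row["DB_SENDER"], row["DB_RECIPIENT"])
        if any(p.strip().lower() == sender_key for p in participants):
            return True
    # An unknown thread id yields no rows, so the reply is rejected as well.
    return False
```

A reply would then be distributed only when this check passes for the rows returned for the given thread id. Note that this relies on the sender address of the incoming email, which can be spoofed, so it raises the bar rather than closing the gap.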

The cost of the solution is negligible. Assuming you already have an email account in Office 365, the Logic App consumes very little: about 0.03 €/week. The cost is tied to the volume of emails, so in my relatively small testing it stayed very low.

But why?

Obviously, this solution isn’t production-ready, or even meant to be used as such. The beauty of using something like Logic Apps is that you can very rapidly change the logic once business requirements change. Replacing part of the logic – perhaps using an Azure Function to encrypt the identities – would be trivial.

A skeleton approach like this adds flexibility and keeps the cost low for prototyping and implementation. Instead of spending several days implementing custom code, it’s easy to try out how the implementation would work in a few hours and then build from there.

Using Azure SQL for data storage would make it slightly easier to work with the identities and data, but table storage allows for faster prototyping, as you don’t have to worry about schema changes.

In conclusion

This was a fun, easy and interesting project to build. I hope this encourages others to experiment with Azure services to see how services can be built in the cloud!